MotionRiver

Universal Mocap Streamer

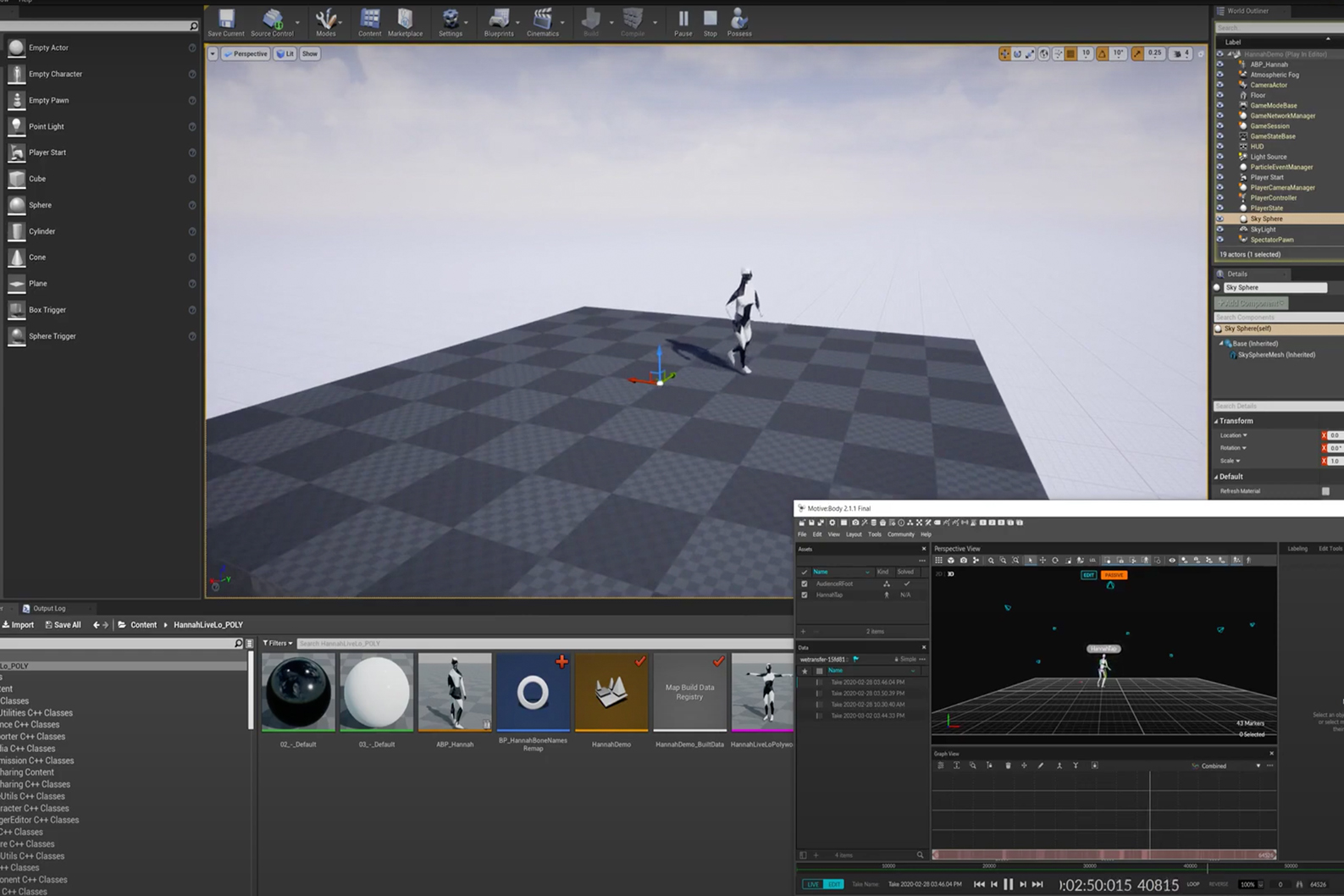

Imagine immersing yourself in a VR experience in the UK where all the characters are animated by live actors in Australia, or playing a dance video game that features real dancers captured in Mumbai. MotionRiver allows developers to create new kinds of responsive experience, from live immersive performances to interactive video games. Instead of repeating the same programmed or looping sequence over and over, characters can react and respond in a natural, realistic way, because they are controlled by real actors.

MotionRiver is an innovative open-source software toolkit that takes in and outputs a wide variety of mocap data, converting it into a ‘universal’ format. Just as Android and iOS phones run different operating systems, different mocap systems produce different types of data. This tool addresses that compatibility problem, allowing greater interconnectivity. The application streams the converted data over the internet to remote computers running MotionRiver, which can receive the motion data and use it in whatever format they need.
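
MotionRiver's actual universal format and network transport are not described on this page, so the following is only a minimal Python sketch of the general idea, built on stated assumptions. The frame layout (named joints with positions in metres, right-handed and Y-up), the two source adapters (`from_zup_millimetres`, `from_yup_metres`) and the use of JSON over UDP are all hypothetical. The sketch shows how per-source adapters can normalise different capture conventions into one shared frame type before it is serialised and sent to a remote machine.

```python
import json
import socket
from dataclasses import asdict, dataclass, field

# Hypothetical "universal" layout used only for this sketch:
# named joints, positions in metres, right-handed, Y-up.


@dataclass
class Joint:
    name: str
    position: tuple[float, float, float]  # metres, Y-up


@dataclass
class UniversalFrame:
    subject: str      # e.g. a performer's identifier
    timestamp: float  # seconds since capture start
    joints: list[Joint] = field(default_factory=list)


def from_zup_millimetres(subject: str, timestamp: float,
                         points: dict[str, tuple[float, float, float]]) -> UniversalFrame:
    """Hypothetical adapter for a source that reports millimetres in a Z-up frame."""
    joints = []
    for name, (x, y, z) in points.items():
        # Scale mm -> m, then rotate Z-up into Y-up: (x, y, z) -> (x, z, -y).
        joints.append(Joint(name, (x / 1000.0, z / 1000.0, -y / 1000.0)))
    return UniversalFrame(subject, timestamp, joints)


def from_yup_metres(subject: str, timestamp: float,
                    points: dict[str, tuple[float, float, float]]) -> UniversalFrame:
    """Hypothetical adapter for a source already in metres and Y-up."""
    return UniversalFrame(subject, timestamp,
                          [Joint(name, tuple(p)) for name, p in points.items()])


def encode(frame: UniversalFrame) -> bytes:
    """Serialise a frame as UTF-8 JSON (a stand-in wire format)."""
    return json.dumps(asdict(frame)).encode("utf-8")


def decode(payload: bytes) -> UniversalFrame:
    """Rebuild a frame on the receiving machine."""
    data = json.loads(payload.decode("utf-8"))
    joints = [Joint(j["name"], tuple(j["position"])) for j in data["joints"]]
    return UniversalFrame(data["subject"], data["timestamp"], joints)


def send_frame(sock: socket.socket, address: tuple[str, int],
               frame: UniversalFrame) -> None:
    """Send one frame as a single UDP datagram (an assumed transport)."""
    sock.sendto(encode(frame), address)
```

For example, `from_zup_millimetres("dancer_1", 0.5, {"hips": (100.0, 2000.0, 950.0)})` places the hips joint at `(0.1, 0.95, -2.0)`. This is the same frame that `from_yup_metres` produces from data already in metres and Y-up, so the receiver never needs to know which system captured it. A real pipeline would also have to carry joint rotations, retarget between skeletons and handle timing and packet loss. JSON over UDP is used here only because it is easy to read.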

The innovation stems from research questions relating to how to capture and disseminate liveness across digital platforms:

- How do we create new spaces in which immersive performance can be made?

- How do we bridge the gap of distance and work within the new context of dislocation and confinement?

MotionRiver is a new tool designed to make motion capture more accessible and to open up new methods of cultural and creative collaboration.

The MotionRiver tool is currently being used by Thayaht to develop DAZZLE, in which the artist collectives Gibson/Martelli and Peut-Porter join forces to re-stage a mixed-reality version of the 1919 Chelsea Arts Club Dazzle Ball. Held after five years of war and inspired by the dazzle patterns painted on naval ships, the original Ball applied zig-zag motifs to costumes and set design, playing with audiences' vision and perception. It was a one-off event that focused the era’s artistic and social energies so intensely that it immediately spawned copycats in Washington DC and Sydney, and it has secured a lasting place in cultural history.

The live VR experience offers participants the chance to enter the virtual world, where they are invited to take part in choreographed sequences and improvisations with dancers. Here the dancers duet with each visitor, sensitively ‘listening’ to their responses and driving the interaction. Technically, participants wear backpack PCs, head-mounted displays (HMDs) and trackers on their hands and feet. This setup gives each of up to five participants an avatar body, enhancing their sense of presence in the virtual world. The two live performers wear specialised mocap suits that let them control ‘digital doubles’. Because everyone shares the same tracked space, participants can see the performers' virtual ‘doubles’ and, in turn, the performers can respond to participants in real time, extending touch out of and into the virtual space. Music and technology playfully encourage dance and interaction, with performers and avatars blending with the audience in a live virtual reality performance.

Project Manager

Hardware

Vicon, OptiTrack, PC, VR headset

Team

- Bruno Martelli, Lead Artist, VR/Mocap Developer, THAYAHT

- Dan Tucker, Executive Producer, THAYAHT

- Simon Barrett, Software Developer, CEO/Co-founder, Coop Innovations

- Alex Counsell, Mocap Consultant, Research Fellow, School of Creative Technologies, University of Portsmouth

Partners

Cooperative Innovations – https://www.coopinnovations.co.uk

University of Portsmouth Motion Capture Studio – http://mocap.port.ac.uk
